When was the last time a month passed without a new AI-related scandal in the gaming industry? Art used without authorization, mass layoffs caused by AI replacing people, and countless showcases of AI in games that bring no clear value.

Most players are highly critical of this behavior, and developers at large share that view – more than 50% of industry professionals think that AI has a negative impact on gaming. So why does AI keep spreading despite all of this? It’s not just the hype cycle. We believe the secret lies in how AI is used – not to replace people or churn out low-quality experiments, but as a working tool that is effective and completely ethical. To start, let’s look at the history of AI in game design.

What Is AI in Games? Not Just NPC Logic

For years, AI in games meant one thing – enemy behavior. Pathfinding, state machines, scripted reactions. If an NPC could take cover or flank the player, it was considered advanced.

That definition no longer holds. Today, AI reaches into every part of the game development process. The focus has shifted from “How smart is this enemy?” to “How intelligently does this game respond to the player?”

According to a Google Cloud survey, 87% of video game developers use AI agents. That doesn’t mean they let AI design games for them. Instead, the tools help them save time on repetitive tasks and do their core work faster.

Game design agencies often have strong creative direction and gameplay expertise. What they may lack is the infrastructure and data-layer architecture needed to design intelligent systems that scale. Agencies specializing in AI don’t replace designers – they extend them. They build frameworks that allow designers to move from handcrafted scripts to adaptive systems.

Smarter experiences are not about making games harder. They are about making games more responsive.

A well-designed AI system can:

- detect when a player is disengaging

- adjust challenge curves dynamically

- personalize rewards without breaking economy balance

- identify friction before churn happens

This changes the design philosophy itself. Instead of shipping static content, teams design systems that evolve in response to player behavior.

And this is where the real transformation happens. AI in modern game design is less about spectacle and more about structure. It’s not the visible trick. It’s the invisible layer that makes everything feel intentional.

In the next section, we’ll look at how this evolution happened – from rigid scripting to adaptive design systems that learn and respond over time.

From Rule-Based Logic to Adaptive Systems: The Real Evolution of Game AI

What the industry has historically called “AI” in games was not artificial intelligence in the machine learning sense. It was deterministic decision logic designed to simulate intelligence.

If you go back to the 80s and early 90s, what we called “AI” was mostly clever rule design.

Take Pac-Man. The ghosts didn’t think. Each one followed a specific movement pattern coded directly into the game. They felt different because their rules were different. That was the trick. No learning, no adaptation — just tightly written behavior.

From today’s perspective, we wouldn’t even call this AI. In 2020, NVIDIA researchers created an AI model that could generate a fully functional version of Pac-Man without an underlying game engine. They did it by training the model on 50,000 episodes of the game, without giving it any of the rules.

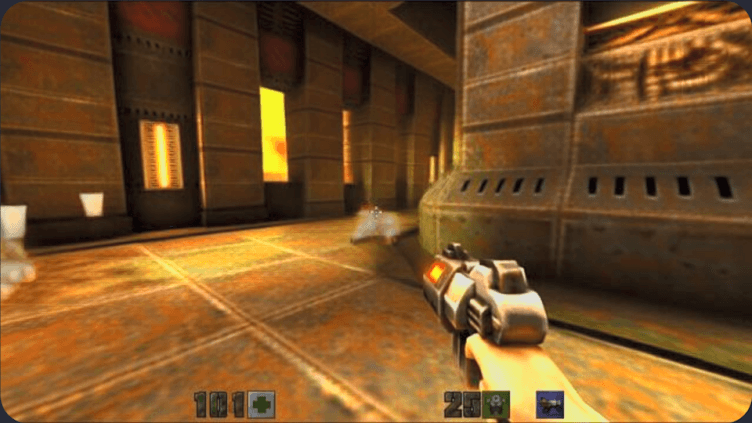

Jump to the mid-90s and the legendary Quake. This game brought plenty of innovation to game development, including mechanics that bring us closer to modern AI. Enemies could switch states, such as patrol, chase, attack, and retreat, depending on what the player did. It looked reactive, and for its time it felt remarkably dynamic. But everything they did was still defined ahead of time. The system didn’t change. It executed.

Image credit: Microsoft
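The patrol/chase/attack/retreat switching described for Quake is a classic finite state machine. The sketch below is a generic illustration, not Quake’s actual code: the distance thresholds, the health cutoff, and the `next_state` function are hypothetical. Note that every transition is a rule written in advance.

```python
from enum import Enum, auto


class State(Enum):
    PATROL = auto()
    CHASE = auto()
    ATTACK = auto()
    RETREAT = auto()


# Hypothetical tuning values, fixed in advance by a designer.
SIGHT_RANGE = 20.0
ATTACK_RANGE = 3.0
LOW_HEALTH = 25


def next_state(current: State, distance: float, health: int) -> State:
    """Return the next state from fixed rules; nothing here learns or changes."""
    if health < LOW_HEALTH:
        return State.RETREAT
    if current is State.RETREAT and distance <= SIGHT_RANGE:
        return State.RETREAT  # keep fleeing until the player is out of sight
    if distance <= ATTACK_RANGE:
        return State.ATTACK
    if distance <= SIGHT_RANGE:
        return State.CHASE
    return State.PATROL


# Example run: the player approaches, wounds the enemy, then backs off.
state = State.PATROL
trace = []
for distance, health in [(30.0, 100), (15.0, 100), (2.0, 100), (2.0, 10), (10.0, 40), (30.0, 40)]:
    state = next_state(state, distance, health)
    trace.append(state.name)
print(trace)  # ['PATROL', 'CHASE', 'ATTACK', 'RETREAT', 'RETREAT', 'PATROL']
```

However many times it runs, the same inputs always produce the same state, which is what separates this kind of logic from adaptive systems.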

Curiously, in 2025, Microsoft did something similar to what NVIDIA had done with Pac-Man five years earlier. It released a new Copilot feature that recreates Quake 2 in real time, with AI generating the game as it’s being played. But this time, it wasn’t perceived as an interesting experiment. Players were largely frustrated that the company presented a simply worse version of the game as something exciting. It’s one of those cases where AI was used for no apparent reason other than to “show off what AI can do”. But let’s get back to the history.

By the mid-2000s, AAA titles such as F.E.A.R. raised the bar again. Enemies seemed coordinated. They took cover, flanked, and shouted to each other. Players described them as “smart.” In reality, these behaviors came from a planning system (goal-oriented action planning) that chained predefined actions toward predefined goals. Complex, yes. Adaptive, no. And yet, 20 years later, people on Reddit still call it the best AI ever seen in an FPS.

Around the same era, developers started experimenting with utility-based systems. To make NPC reactions smarter and less predictable, designers assigned scores to possible actions. The system would evaluate the situation and pick the highest-scoring option. This allowed for more variability, but the logic still depended on handcrafted weights.
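A utility-based selector of the kind described above might look like the following minimal sketch. The actions, the scoring formulas, and the weights are all hypothetical handcrafted values; nothing in the code updates them from player behavior.

```python
from dataclasses import dataclass


@dataclass
class Situation:
    health: float         # 0.0 (dead) .. 1.0 (full)
    ammo: float           # 0.0 (empty) .. 1.0 (full)
    player_visible: bool
    cover_nearby: bool


# Each scorer maps the situation to a number in roughly 0..1.
# The weights are handcrafted by a designer and never change at runtime.
def score_attack(s: Situation) -> float:
    return (0.6 * s.ammo + 0.4 * s.health) if s.player_visible else 0.0


def score_take_cover(s: Situation) -> float:
    return (1.0 - s.health) * 0.9 if s.cover_nearby else 0.0


def score_reload(s: Situation) -> float:
    return (1.0 - s.ammo) * 0.8


def score_wander(s: Situation) -> float:
    return 0.1  # low constant fallback so the NPC always has something to do


ACTIONS = {
    "attack": score_attack,
    "take_cover": score_take_cover,
    "reload": score_reload,
    "wander": score_wander,
}


def choose_action(s: Situation) -> str:
    """Evaluate every action and return the highest-scoring one."""
    return max(ACTIONS, key=lambda name: ACTIONS[name](s))


print(choose_action(Situation(health=0.2, ammo=0.9, player_visible=True, cover_nearby=True)))  # take_cover
```

Variety comes from the situation changing, not from the system learning: the same inputs always produce the same choice.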

All of these approaches shared one trait:

They did not learn from player behavior.

They executed predefined logic.

The best game AI of that era came down to execution: not cutting-edge technology, but the professionalism and dedication of the teams behind it.

The real shift began in the 2010s, when large-scale telemetry became standard in online and live-service games. Telemetry is essentially the automatic collection of gameplay data. Every time a player completes a level, quits mid-session, fails a boss fight three times, purchases an item, or spends five minutes stuck in one area, that information can be recorded. Not personal data – but behavioral signals.

Instead of guessing how players behave, game development companies could now see patterns at scale. They could measure where frustration spikes, where engagement drops, and how progression actually unfolds in real play.

Studios started collecting behavioral data at scale:

- session length

- failure frequency

- progression pacing

- monetization interaction

- churn indicators
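To make this data layer concrete, here is a minimal sketch of turning raw telemetry events into per-player signals like those listed above. The event schema (`player_id`, `kind`, `timestamp`), the event kinds, and the seven-day churn threshold are assumptions for illustration, not any studio’s or platform’s actual format.

```python
from collections import defaultdict
from dataclasses import dataclass

SECONDS_PER_DAY = 86_400
CHURN_THRESHOLD_DAYS = 7  # hypothetical: a week of inactivity counts as churn risk


@dataclass
class Event:
    player_id: str
    kind: str         # hypothetical kinds: session_start, session_end, level_fail, level_complete, purchase
    timestamp: float  # seconds since an arbitrary epoch


def summarize(events: list[Event], now: float) -> dict[str, dict]:
    """Aggregate raw events into behavioral signals per player."""
    by_player: dict[str, list[Event]] = defaultdict(list)
    for e in sorted(events, key=lambda ev: ev.timestamp):
        by_player[e.player_id].append(e)

    summary = {}
    for pid, evs in by_player.items():
        counts: dict[str, int] = defaultdict(int)
        play_seconds, sessions, started = 0.0, 0, None
        for e in evs:
            counts[e.kind] += 1
            if e.kind == "session_start":
                started = e.timestamp
            elif e.kind == "session_end" and started is not None:
                play_seconds += e.timestamp - started  # session length
                sessions += 1
                started = None
        attempts = counts["level_fail"] + counts["level_complete"]
        idle_days = (now - evs[-1].timestamp) / SECONDS_PER_DAY
        summary[pid] = {
            "avg_session_minutes": play_seconds / sessions / 60 if sessions else 0.0,
            "failure_rate": counts["level_fail"] / attempts if attempts else 0.0,
            "levels_per_hour": counts["level_complete"] / (play_seconds / 3600) if play_seconds else 0.0,
            "purchases": counts["purchase"],                 # monetization interaction
            "churn_risk": idle_days > CHURN_THRESHOLD_DAYS,  # crude churn indicator
        }
    return summary


events = [
    Event("p1", "session_start", 0), Event("p1", "level_fail", 60),
    Event("p1", "level_fail", 120), Event("p1", "level_complete", 300),
    Event("p1", "session_end", 1800),
]
print(summarize(events, now=2 * SECONDS_PER_DAY))
```

No personal data is involved: every signal is derived from what the player did in the game and when.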

This data layer made something new possible — adaptive systems.

Instead of asking:

“What should the NPC do in this scenario?”

Designers began asking:

“How should the system respond to this player?”

Dynamic difficulty adjustment, live economy balancing, and personalized event tuning emerged from this shift. The AI layer moved from character behavior to system intelligence.

Later on, systems started affecting more than moment-to-moment combat. In Middle-earth: Shadow of Mordor, the Nemesis System tracked how you interacted with specific enemies. Orcs stopped being a uniform mass of objects to fight. If you humiliated or escaped one, he might remember it the next time you met. The hierarchy shifted based on those encounters.

Image credit: thegamer.com

It wasn’t machine learning. The rules were still predefined. But the structure allowed outcomes to feel personal and unpredictable. That was the turning point – not smarter enemies, but systems that reshaped the world around the player’s actions. The Nemesis System was such a successful and innovative game mechanic that it was patented in 2021 (which unfortunately limited its use in other games).
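The general idea, enemies that record encounters and react through predefined rules, can be illustrated with a small sketch. This is not the patented Nemesis System or its implementation; the `Enemy` class, the outcome names, the rank changes, and the taunts are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical consequences of each encounter outcome for the enemy's rank.
RANK_CHANGE = {"killed_player": +1, "player_fled": +1, "humiliated": -1}

TAUNTS = {
    "killed_player": "Back from the dead? I'll finish the job.",
    "player_fled": "Running again, coward?",
    "humiliated": "You shamed me once. Not this time.",
}


@dataclass
class Enemy:
    name: str
    rank: int = 1
    memories: list[str] = field(default_factory=list)

    def record_encounter(self, outcome: str) -> None:
        """Remember what happened and apply a predefined rank change (never below 1)."""
        self.memories.append(outcome)
        self.rank = max(1, self.rank + RANK_CHANGE.get(outcome, 0))

    def greet(self) -> str:
        """Choose a line based on the most recent shared history."""
        if not self.memories:
            return f"{self.name}: Who are you?"
        return f"{self.name}: {TAUNTS.get(self.memories[-1], 'We meet again.')}"


orc = Enemy("Grub")
orc.record_encounter("player_fled")
print(orc.rank, orc.greet())  # 2 Grub: Running again, coward?
```

Every rule is fixed, yet because each enemy carries its own history, two players rarely see the same sequence of outcomes.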

Today, with machine learning integration becoming more common in production pipelines, the distinction is clearer:

Rule-based AI follows instructions written in advance.

Adaptive AI looks at player behavior and modifies systems over time.

The terminology has changed a lot in recent years, and so has the role of agencies building these systems.

Where AI Is Most Commonly Used in Games Today

The fact that the vast majority of game developers use AI nowadays probably isn’t surprising to you. The question is where exactly and how it is used. Nobody wants to think that their favorite game characters were generated by AI, but if AI helps deliver a game in one year instead of five, that is something players surely wouldn’t mind.

Here are the most common areas where AI is actively used today.

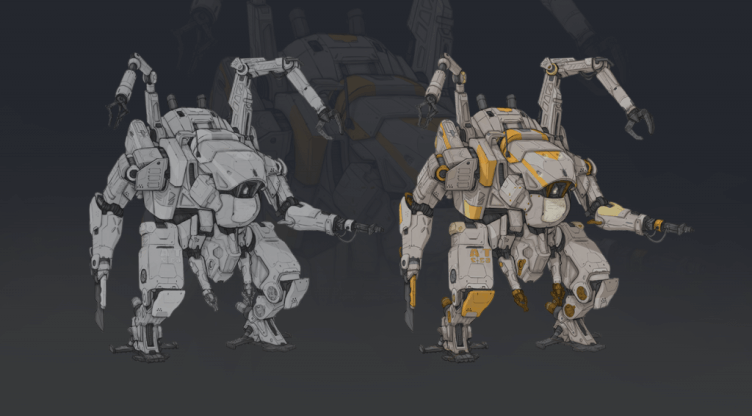

1. Production and Asset Creation

This is currently the largest area of adoption.

AI tools are widely used for:

- concept iteration and visual exploration

- texture upscaling and enhancement

- animation cleanup and retargeting

- voice prototyping and localization support

- code assistance

Game art outsourcing studios are not replacing the creative process with generative AI. They are accelerating iteration. Instead of spending days producing multiple visual variations by hand, teams can test directions faster and refine the chosen one manually afterward.

BallBuds: game art from the Kevuru Games portfolio, enhanced with AI tools

For example, texture upscaling tools based on neural networks are commonly used to remaster older titles. NPC voice prototyping with AI-generated speech allows narrative teams to test pacing before final recording. The key pattern here is optimization, not automation.

2. Player Analytics and Retention Modeling

Live-service and mobile games rely heavily on behavioral data. AI models are used to:

- predict churn probability

- segment players by engagement patterns

- optimize reward timing

- personalize event difficulty

- recommend in-game offers
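As a rough illustration of churn prediction, here is a from-scratch logistic regression trained on a handful of made-up player records. The two features, the toy data, and the training settings (`lr`, `epochs`) are assumptions; production systems typically train on far more data with an established ML library.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def predict(w: list[float], b: float, x: list[float]) -> float:
    """Churn probability for one player's feature vector."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def train_logistic(xs: list[list[float]], ys: list[int], lr: float = 0.5, epochs: int = 2000):
    """Fit weights with batch gradient descent on log-loss."""
    w, b, n = [0.0] * len(xs[0]), 0.0, len(xs)
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * len(w), 0.0
        for x, y in zip(xs, ys):
            err = predict(w, b, x) - y  # derivative of log-loss w.r.t. the logit
            grad_w = [g + err * xi for g, xi in zip(grad_w, x)]
            grad_b += err
        w = [wi - lr * g / n for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


# Made-up training data, features scaled to 0..1:
# [sessions in the last 7 days / 7, days since last login / 14]
players = [[1.0, 0.0], [0.9, 0.1], [0.7, 0.1], [0.2, 0.6], [0.1, 0.8], [0.0, 1.0]]
churned = [0, 0, 0, 1, 1, 1]

w, b = train_logistic(players, churned)
print(f"active player:  {predict(w, b, [0.85, 0.05]):.2f}")  # low
print(f"lapsing player: {predict(w, b, [0.05, 0.90]):.2f}")  # high
```

The output is a probability rather than a yes/no label, which lets a team decide how early to intervene, for example with a reward or an easier event.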

This is especially common in free-to-play ecosystems. For example, many mobile strategy and RPG titles dynamically tune offers and event rewards based on player progression data. While companies rarely publish full technical details, this kind of predictive modeling has become industry standard in mobile analytics platforms.

3. Dynamic Difficulty Adjustment

Adaptive difficulty has existed for decades, but modern systems are more data-driven. Instead of switching between predefined difficulty modes, AI-based approaches can monitor:

- reaction times

- failure frequency

- resource depletion rates

- time spent per encounter

The system can then subtly adjust enemy health, spawn density, or loot drops.
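A minimal sketch of that loop, using failure frequency as the only signal for brevity: compute a difficulty multiplier, move it in small steps toward a target failure rate, and scale enemy health, spawn count, and loot within fixed bounds. The target, dead band, step size, bounds, and function names are hypothetical.

```python
from dataclasses import dataclass

TARGET_FAILURE_RATE = 0.30     # hypothetical "sweet spot" chosen by designers
TOLERANCE = 0.10               # dead band: no change within ±10 points of the target
STEP = 0.05                    # one adjustment moves difficulty by 5%
MIN_MULT, MAX_MULT = 0.8, 1.2  # never drift more than ±20% from authored values


@dataclass
class EncounterStats:
    attempts: int
    failures: int


def update_multiplier(current: float, stats: EncounterStats) -> float:
    """Nudge a difficulty multiplier toward the target failure rate, one small step at a time."""
    if stats.attempts == 0:
        return current
    failure_rate = stats.failures / stats.attempts
    if failure_rate > TARGET_FAILURE_RATE + TOLERANCE:
        current -= STEP  # player is struggling: ease off slightly
    elif failure_rate < TARGET_FAILURE_RATE - TOLERANCE:
        current += STEP  # player is cruising: push back slightly
    return min(MAX_MULT, max(MIN_MULT, current))


def apply_multiplier(m: float, base_health: int, base_spawns: int, base_loot: float) -> dict:
    """Scale authored values; loot chance moves opposite to difficulty."""
    return {
        "enemy_health": round(base_health * m),
        "spawn_count": max(1, round(base_spawns * m)),
        "loot_chance": min(1.0, base_loot / m),
    }


m = 1.0
for _ in range(3):  # three rough encounters in a row
    m = update_multiplier(m, EncounterStats(attempts=10, failures=7))
print(apply_multiplier(m, base_health=200, base_spawns=6, base_loot=0.1))
```

Small steps plus a dead band are what keep the adjustment subtle: the player should feel the encounter got fairer, not notice a switch being flipped.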

Another good use of AI is matchmaking in online games, where players have to be grouped to play with or against each other. It is well suited to balancing skill levels. While not machine learning in every case, ranking and performance prediction systems are increasingly data-informed and continuously recalibrated. The goal is not to make the game easier – it is to maintain engagement.
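One common, well-documented building block here is an Elo-style rating update combined with pairing players of similar rating. The sketch below uses the standard Elo expected-score formula; the K-factor of 32, the player names, and the greedy pairing strategy are illustrative choices, not how any particular game’s matchmaker works.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A beats B under the Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


def update_ratings(rating_a: float, rating_b: float, a_won: bool, k: float = 32.0) -> tuple[float, float]:
    """Move both ratings toward the observed result; upsets move them further."""
    delta = k * ((1.0 if a_won else 0.0) - expected_score(rating_a, rating_b))
    return rating_a + delta, rating_b - delta


def pair_by_rating(ratings: dict[str, float]) -> list[tuple[str, str]]:
    """Greedy pairing: sort by rating and match neighbors (an odd player out waits)."""
    ordered = sorted(ratings, key=ratings.get)
    return [(ordered[i], ordered[i + 1]) for i in range(0, len(ordered) - 1, 2)]


ratings = {"ana": 1000.0, "ben": 1600.0, "cy": 1050.0, "dee": 1550.0}
print(pair_by_rating(ratings))  # [('ana', 'cy'), ('dee', 'ben')]
print(update_ratings(1500.0, 1500.0, a_won=True))  # (1516.0, 1484.0)
```

Because ratings are recalculated after every match, the system keeps recalibrating as players improve, even though the formula itself never changes.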

4. Procedural Generation and Content Scaling

AI is also used to support procedural world-building. Earlier procedural systems relied on mathematical noise functions and rule combinations. Today, AI-assisted generation helps with:

- terrain generation

- quest variation

- dialogue expansion

- environmental detail enhancement

Games like No Man’s Sky rely heavily on procedural systems to create large-scale worlds. While not purely machine-learning driven, the principle remains the same: algorithmic systems extend content beyond manual capacity. Modern AI tools are now being layered on top of these systems to add variety and reduce repetition.
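The classic, pre-machine-learning approach mentioned above, mathematical noise, fits in a few lines: 1D value noise summed over several octaves turns a seed into a deterministic terrain profile. This is a generic textbook technique, not No Man’s Sky’s actual generator; the hashing of seed and position, the base frequency, and the octave count are illustrative choices.

```python
import math
import random


def value_noise_1d(x: float, seed: int) -> float:
    """Smoothly interpolate pseudo-random values placed at integer points."""
    x0 = math.floor(x)
    t = x - x0
    t = t * t * (3 - 2 * t)  # smoothstep easing removes sharp corners
    v0 = random.Random(seed * 1_000_003 + x0).random()
    v1 = random.Random(seed * 1_000_003 + x0 + 1).random()
    return v0 + (v1 - v0) * t


def terrain_heights(width: int, seed: int, octaves: int = 4) -> list[float]:
    """Sum noise at doubling frequencies and halving amplitudes (fractal noise)."""
    heights = []
    for i in range(width):
        h, amplitude, frequency, total_amp = 0.0, 1.0, 1 / 16, 0.0
        for _ in range(octaves):
            h += amplitude * value_noise_1d(i * frequency, seed)
            total_amp += amplitude
            amplitude /= 2
            frequency *= 2
        heights.append(h / total_amp)  # normalize back into 0..1
    return heights


h = terrain_heights(60, seed=42)
print(" ".join(f"{v:.2f}" for v in h[:8]))
```

The same seed always yields the same terrain, so a procedural system only needs to store seeds, not the content itself.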

Here is another example, very different from No Man’s Sky: Candy Crush Saga, a mobile match-three game. Candy Crush earns exceptionally high revenue with a relatively small team. The game releases new levels regularly, and much of the work of creating them is now handled with AI. If you are curious to learn more numbers and secrets behind the success of Candy Crush, read our article.

5. Testing and Quality Assurance

One of the least visible but most practical uses of AI is automated testing. AI agents can simulate player behavior to:

- identify level-breaking paths

- stress-test economies

- detect balance exploits

- uncover collision bugs
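As a toy illustration of agent-based testing, the sketch below explores a tiny grid level and reports whether the exit can be reached without the key, a level-breaking path. The level layout and the “key opens the door” rule are hypothetical, and it uses an exhaustive breadth-first explorer rather than a learned agent: on a level this small, exhaustive search is guaranteed to find the shortcut, while real test agents are often scripted or trained bots.

```python
from collections import deque

# Hypothetical level: the wall (#) with a locked door (D) is meant to force
# players to fetch the key (K) before reaching the exit (E).
LEVEL = [
    "S...#....",
    "....D..E.",
    "...K#....",
    "....#....",
    ".........",  # bug: the wall stops one tile short of the floor
]


def find_keyless_route(level: list[str]):
    """Explore every (position, has_key) state; return a path to E without the key, if any."""
    start = next((r, c) for r, row in enumerate(level) for c, ch in enumerate(row) if ch == "S")
    queue = deque([(start, False, [start])])
    seen = {(start, False)}
    while queue:
        (r, c), has_key, path = queue.popleft()
        if level[r][c] == "E" and not has_key:
            return path
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if not (0 <= nr < len(level) and 0 <= nc < len(level[0])):
                continue  # off the map
            tile = level[nr][nc]
            if tile == "#" or (tile == "D" and not has_key):
                continue  # blocked
            state = ((nr, nc), has_key or tile == "K")
            if state not in seen:
                seen.add(state)
                queue.append((state[0], state[1], path + [(nr, nc)]))
    return None


route = find_keyless_route(LEVEL)
print(f"Exploit: exit reached without the key in {len(route) - 1} moves" if route else "No keyless route")
```

Because breadth-first search visits states in order of distance, the reported route is also the shortest exploit, which makes the bug report easy to reproduce.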

In large-scale multiplayer environments, this reduces manual QA workload and shortens iteration cycles. This area is growing quickly because it produces measurable cost savings.

The Pattern Across All Categories

Across production, analytics, balancing, and testing, one pattern repeats: AI is used to increase speed, scale, and precision.

It is rarely the creative decision-maker.

It is increasingly the optimization layer.

Case Scenario: Adaptive Difficulty in a Mid-Core Action Game

Imagine a mid-core action game with skill-based combat and progression tied to gear upgrades, something like Resident Evil 4 or Hades. Once the main development stages are complete, the game still needs polishing before release. During beta testing, the team notices a familiar pattern.

New players drop off after the third boss encounter.

Experienced players move through early levels too quickly and disengage before mid-game systems unfold.

The traditional solution would be to tweak health values, adjust damage numbers, and rebalance difficulty tiers manually. That works, but it treats the audience as a single group. Instead, the team implements a lightweight adaptive system.

First, telemetry is structured to capture meaningful signals:

- number of failed attempts per encounter

- time-to-clear per level

- healing item usage rate

- reaction window timing

- upgrade frequency

Within weeks, patterns emerge. Players struggling with boss mechanics show a specific behavioral signature: repeated short attempts, low healing consumption, and rapid retries.
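A rule that flags this “struggling with boss mechanics” signature can be as simple as a few thresholds over the telemetry fields listed earlier. In this minimal sketch, every field name and threshold is a hypothetical placeholder that designers would tune against real beta data.

```python
from dataclasses import dataclass


@dataclass
class BossTelemetry:
    failed_attempts: int
    avg_attempt_seconds: float
    healing_items_used: int
    avg_seconds_between_retries: float


# Hypothetical thresholds; in practice they would be tuned on beta data.
MIN_FAILS = 4
SHORT_ATTEMPT_S = 45.0
LOW_HEALING_PER_ATTEMPT = 0.5
RAPID_RETRY_S = 10.0


def is_struggling(t: BossTelemetry) -> bool:
    """Match the pattern: repeated short attempts, little healing, rapid retries."""
    if t.failed_attempts < MIN_FAILS:
        return False
    healing_per_attempt = t.healing_items_used / t.failed_attempts
    return (
        t.avg_attempt_seconds < SHORT_ATTEMPT_S
        and healing_per_attempt < LOW_HEALING_PER_ATTEMPT
        and t.avg_seconds_between_retries < RAPID_RETRY_S
    )


print(is_struggling(BossTelemetry(failed_attempts=6, avg_attempt_seconds=30.0,
                                  healing_items_used=1, avg_seconds_between_retries=5.0)))  # True
```

Requiring all three signals at once keeps false positives down: a player who simply dies quickly once, or who heals constantly, is not flagged.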

Rather than lowering difficulty globally, the system adjusts selectively:

- slightly extends parry timing windows for flagged players

- reduces secondary enemy spawn frequency during boss fights

- increases early gear drop probability

The changes are subtle. Most players never notice them directly. But frustration curves flatten. Retention improves.

Meanwhile, high-skill players trigger the opposite response. Enemy aggression increases marginally. Reward pacing slows to maintain challenge.

This isn’t machine learning in the cinematic sense. It’s structured data interpretation connected to controlled design levers.

The important part is architecture. Designers define what signals matter, what thresholds trigger adjustments, what parameters are safe to modify, and so on.

AI does not “decide” creatively. It monitors and activates predefined flexibility ranges. The result is not a different game for each player. It’s a game that responds within boundaries set by the design team.
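The architecture described above (designer-defined signals, triggers, and safe parameter ranges) can be expressed as a small table of “levers” with hard bounds. The lever names echo this scenario’s examples (parry window, secondary spawns, early gear drops, enemy aggression, reward pacing); the numbers, the `Lever` structure, and the profile names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Lever:
    default: float
    low: float   # lowest value designers consider safe
    high: float  # highest value designers consider safe
    step: float  # size of one adjustment


# Hypothetical design table: adaptive changes can never leave these ranges.
LEVERS = {
    "parry_window_ms": Lever(default=150.0, low=150.0, high=200.0, step=10.0),
    "secondary_spawn_rate": Lever(default=1.0, low=0.6, high=1.0, step=0.1),
    "early_gear_drop_chance": Lever(default=0.05, low=0.05, high=0.10, step=0.01),
    "enemy_aggression": Lever(default=1.0, low=1.0, high=1.15, step=0.05),
    "reward_interval_scale": Lever(default=1.0, low=1.0, high=1.2, step=0.05),
}

# Which way each lever moves for a flagged profile (+1 up, -1 down).
RESPONSES = {
    "struggling": {"parry_window_ms": +1, "secondary_spawn_rate": -1, "early_gear_drop_chance": +1},
    "high_skill": {"enemy_aggression": +1, "reward_interval_scale": +1},
}


def adjust(settings: dict[str, float], profile: str) -> dict[str, float]:
    """Apply one bounded step per lever for the profile; unknown profiles change nothing."""
    updated = dict(settings)
    for name, direction in RESPONSES.get(profile, {}).items():
        lever = LEVERS[name]
        proposed = updated[name] + direction * lever.step
        updated[name] = min(lever.high, max(lever.low, proposed))  # clamp to the safe range
    return updated


settings = {name: lever.default for name, lever in LEVERS.items()}
for _ in range(10):  # even many consecutive adjustments stay inside the bounds
    settings = adjust(settings, "struggling")
print(settings["parry_window_ms"], settings["secondary_spawn_rate"])  # 200.0 0.6
```

Keeping the bounds in a data table rather than in code means designers can review and tune exactly how far the game is allowed to adapt.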

And that is typically where agencies come in – designing the telemetry layer, building the response framework, and ensuring that adaptation enhances experience rather than destabilizing balance.

Conclusion: What the Next Few Years Look Like for AI in Game Development

The direction is pretty clear: AI use will keep expanding, but most of it will sit behind the curtain. Not “games made by AI,” but games made faster, tuned more precisely, and operated with more data-awareness.

What the data says right now:

- AI adoption in dev workflows is already mainstream. Google Cloud’s Harris Poll study reported 90% of surveyed developers were integrating AI into workflows, and 95% said it reduces repetitive tasks.

- Unity’s industry report points in the same direction, with broad adoption of AI tools in select workflows and a focus on speed and efficiency rather than replacement.

- At the same time, player-facing use is still limited. A recent GDC survey summary reported that only a small share of developers are applying genAI directly to player-facing features, even while usage for research, brainstorming, and admin work is common.

- Sentiment is mixed and getting tougher. The same GDC reporting shows that more than half of developers view genAI as having a negative impact on the industry (yet many of them use it anyway).

Where this is heading, based on those signals:

- more “AI as copilot” in production – faster iteration, faster prototyping, faster localization, faster QA, more tooling around pipelines

- more “AI as operations layer” in live games – balancing, tuning, moderation, and personalization powered by telemetry and guardrails (not freeform generation)

- more governance, provenance, and rights management – adoption continues, but teams will be stricter about what data is used, what’s allowed in the pipeline, and what ships to players

A useful way to phrase the future without hype:

AI will push the industry toward smarter systems and faster production cycles, but the winning teams will treat it like infrastructure: controlled, measurable, and aligned with art direction and design intent.

Our game artists and developers at Kevuru Games always try to find the balance between using new technologies to improve and optimize their work and adopting them just for the sake of it. If you have to go looking for a reason to use AI, you probably don’t need it.

Here is what our 3D game development expert, Olga Andrianova, says:

Using models trained on other artists’ work is a harsh no. But if you manage to save hours of time on polishing little details thanks to AI tools, there is no shame in using them.

In a recent project, using AI tools in the pipeline helped deliver art 40% faster than usual – all this without generating images. Curious to find out how we did it? Read on here.