When people talk about AI in 3D art, the conversation often jumps to extremes. On one side, there’s the idea that AI will replace artists entirely. On the other, that it will magically generate production-ready characters with minimal input. Neither reflects how modern pipelines actually work.

In practice, AI isn’t redefining how characters are created – it’s shifting where time and effort go inside the pipeline. The key creative decisions still stay with artists, while AI is gradually taking over parts of the technical work around them. On its own, the shift feels small. But across dozens or hundreds of assets, it starts to change how production actually moves.

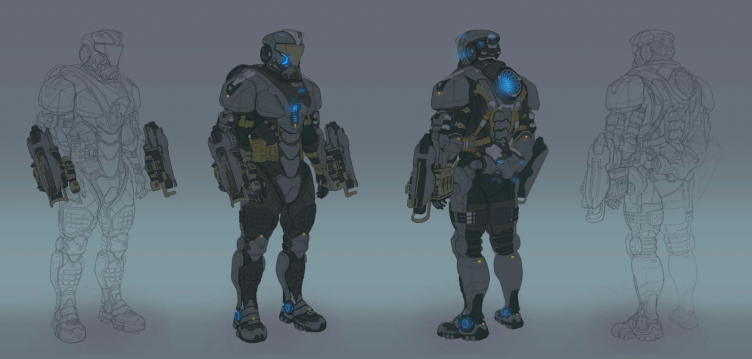

To see where that change really happens, it makes more sense to look at the process, not the final result. A modern 3D character is not a single task – it is a sequence of interdependent steps, each with its own constraints, tools, and risks. Some of these steps require artistic judgment. Others require precision, consistency, and time.

AI does not affect all stages equally. It tends to integrate where the work is repetitive and structured, not where creative intent is defined. That distinction shapes how studios adopt these tools – and why the role of the artist is evolving, but not disappearing.

What a Character Pipeline Really Looks Like

A finished 3D character is the result of multiple tightly connected stages. And importantly, not all of them are equally creative.

Here’s a simplified breakdown of a typical production pipeline:

| Stage | Description | Time Share (Approx.) | Nature of Work |
| --- | --- | --- | --- |
| Concept / Design | Shape language, proportions, identity | 10–15% | Creative |
| High-poly sculpt | Detailed form in ZBrush or similar | 20–25% | Creative |
| Retopology | Clean topology for animation | 10–15% | Technical |
| UV mapping | Preparing surfaces for texturing | 5–10% | Technical |
| Texturing | Materials, surface detail | 15–20% | Mixed |
| Rigging prep | Mesh readiness for animation | 5–10% | Technical |
| Engine integration | LODs, shaders, optimization | 10–15% | Technical |

What stands out is this: a significant portion of time is spent on technical preparation, not on creative decisions.
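As a rough check on that claim, the approximate ranges from the table can be summed by type of work. The sketch below only adds up the low and high ends of each range; the function name `share_by_nature` is illustrative, and because the ranges are approximate, the totals are not expected to add up to exactly 100%.

```python
# Time-share ranges (percent) copied from the table: (nature, low, high).
STAGES = {
    "Concept / Design": ("Creative", 10, 15),
    "High-poly sculpt": ("Creative", 20, 25),
    "Retopology": ("Technical", 10, 15),
    "UV mapping": ("Technical", 5, 10),
    "Texturing": ("Mixed", 15, 20),
    "Rigging prep": ("Technical", 5, 10),
    "Engine integration": ("Technical", 10, 15),
}


def share_by_nature(stages):
    """Sum the low and high ends of each range per nature of work."""
    totals = {}
    for nature, low, high in stages.values():
        current_low, current_high = totals.get(nature, (0, 0))
        totals[nature] = (current_low + low, current_high + high)
    return totals


totals = share_by_nature(STAGES)
for nature, (low, high) in totals.items():
    print(f"{nature}: {low}-{high}%")
```

By this count, purely technical stages account for roughly 30-50% of the time, against 30-40% for purely creative stages and 15-20% for texturing, which mixes both.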

This is where most production friction lives. Not in sculpting a character’s face. But in making sure that face behaves correctly under lighting, deforms properly in animation, and doesn’t break performance budgets.

Where AI Actually Fits

This is where AI starts to matter in a more practical way. Not across the whole pipeline, but in the parts that are repetitive and don’t really benefit from being done manually every time.

In most cases, that means tasks like retopology, UVs, basic texture passes, or LOD setup. These steps are necessary, but they’re not where creative decisions happen. They’re about getting the asset into a usable, stable state.

Take retopology as an example. It has to be done well, but it’s also predictable work. AI tools can speed up the initial pass or reduce the amount of cleanup needed. The same goes for UVs – instead of laying everything out from scratch, artists often start from an automated result and adjust it.

Texturing follows a similar pattern. Generating a base is faster than building everything manually, but the final look still depends on how that base is refined. Material behavior, wear, consistency – those things still need to be controlled by hand.

LOD creation is another good example. It’s required for performance, but rarely something artists want to spend time on. Automating parts of it doesn’t change the result; it just removes repetition.

None of this replaces the process. It just reduces how much time is spent on the parts that don’t require judgment. And once that reduction repeats across a full production, it starts to make a real difference.

Where AI Doesn’t Help

There are still parts of the pipeline where AI has very little impact. These are the stages where decisions matter more than execution, and where small changes can significantly affect how a character is perceived.

Silhouette is one of them. The overall shape of a character – how it reads from a distance, how recognizable it is in motion – can’t be meaningfully automated. The same applies to proportions. Even slight adjustments can change whether something feels believable or not, especially in stylized work.

Consistency is another challenge. It’s one thing to generate a single asset. It’s much harder to ensure that multiple characters feel like they belong to the same world, follow the same visual rules, and support a unified art direction.

Material logic also tends to break down under automation. AI can generate surface detail, but understanding how different materials should behave – how roughness, wear, and variation support the design – still requires deliberate control.

This is where the limits become clear. AI can produce options, but it doesn’t decide which ones are correct. And in character work, those decisions are what define the final result.

The Real Impact of AI on Production

When you look at individual tasks, the gains can seem small. Saving an hour on retopology or speeding up UV work doesn’t feel like much on its own. But production isn’t measured per asset – it’s measured across the whole set.

Once you apply that across dozens or hundreds of characters, the difference becomes hard to ignore. Small time savings add up, and the team’s work process changes. Less effort goes into repetitive cleanup, and more goes into iteration, refinement, and keeping the work aligned.
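To make the scaling concrete, here is a back-of-the-envelope sketch. Only the one hour saved on retopology echoes the example above; the other per-stage savings, the asset counts, and the 40-hour week are hypothetical placeholders, not measured data.

```python
# Hours saved per character, by stage. Only the retopology hour comes from
# the text; the UV and LOD figures are hypothetical placeholders.
HOURS_SAVED_PER_ASSET = {
    "retopology": 1.0,
    "uv_mapping": 0.5,  # hypothetical
    "lod_setup": 0.5,   # hypothetical
}


def total_hours_saved(per_asset_savings, asset_count):
    """Total hours saved when the same savings repeat for every asset."""
    return sum(per_asset_savings.values()) * asset_count


def as_work_weeks(hours, hours_per_week=40):
    """Convert hours to work weeks (40-hour week is an assumption)."""
    return hours / hours_per_week


for count in (12, 100, 300):  # hypothetical production sizes
    hours = total_hours_saved(HOURS_SAVED_PER_ASSET, count)
    print(f"{count} characters: {hours:.0f} hours, "
          f"about {as_work_weeks(hours):.1f} work weeks")
```

Under these assumptions, two hours saved per character turns into five work weeks across a hundred characters. The exact numbers will differ per studio; the point is that the savings scale linearly with the size of the set.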

In practice, this shows up as faster feedback cycles. Artists can move through variations more quickly, test ideas earlier, and catch issues before they spread across the pipeline. That also reduces the number of late-stage fixes, which are usually the most expensive.

At a studio level, this doesn’t necessarily mean smaller teams, but it does shift what those teams focus on. There is less need for purely mechanical work and more emphasis on roles that involve judgment, direction, and integration. Over time, that changes both production structure and expectations for individual artists.

The Skill Shift Inside Character Teams

As work processes change, so do the expectations placed on artists. The change isn’t dramatic, but it is enough to shift which skills and knowledge make someone a valuable team member.

In larger productions, a character artist is no longer focused only on sculpting and texturing. There is an expectation to understand how the asset behaves in the engine – how topology affects deformation, how materials react under different lighting conditions, and how LODs influence performance. The work doesn’t stop at “it looks good.” It has to hold up in use.

In smaller teams, the shift is even more visible. The same person might handle modelling, texturing, material setup, and integration. There’s less separation between roles, which means artists need a clearer understanding of the full pipeline, not just their part of it.

What’s changing isn’t the creative core, but everything around it. Artists still shape form, proportion, and identity, but they also have to think about how their work fits into the system – how it’s used, how it performs, and how quickly it can be iterated on. The line between artistic and technical roles is getting thinner, and that shift is starting to influence both team structure and hiring decisions.

Speed vs Identity Trade-off

As production becomes faster, a different problem starts to appear. When more of the pipeline is assisted or partially automated, it becomes easier to produce assets quickly – but harder to keep them distinct.

AI can generate variations, suggest materials, or speed up surface work, but those results often follow similar patterns. If you lean on these tools too much, everything starts to drift toward the same look. The characters still work, nothing is technically wrong, but they stop standing apart from each other.

You feel it more in stylized projects. There, the look depends on clear decisions, not on piling on detail. Shape, proportions, material feel – all of it has to stay consistent. Once that control slips, the result can look clean and polished, but not memorable.

Speed is useful, but it introduces a trade-off. The faster the pipeline becomes, the more important it is to decide where that speed actually helps – and where efficiency starts working against identity instead of supporting it.

What Still Defines Strong Character Art

Even with faster tools, the things that make a character work haven’t really changed. Most of them become obvious only once the asset is in motion or inside the engine.

Silhouette is still one of the first things that breaks. In gameplay, characters are rarely seen up close. They’re moving, partially hidden, or viewed from a distance. If the shape doesn’t read quickly, extra detail doesn’t fix it – it just adds noise.

Proportions show their issues a bit later. A model might look fine in a static view, but once it’s rigged and animated, small mistakes become much more visible. Joints start behaving oddly, movement feels off, and fixing that at this stage is already expensive.

Materials are another point where problems tend to surface. Material detail matters less than how surfaces react under lighting. If roughness or reflectivity is off, it shows immediately – assets stop matching each other, even if each one looks fine on its own.

And then there’s consistency across the whole set. One character can look good in isolation, but production rarely works that way. Assets are always seen together, under the same lighting and performance constraints. If decisions aren’t aligned, the difference becomes noticeable very quickly.

None of this is new, but it becomes more important when everything else gets faster. The less time is spent on technical steps, the more weight shifts to getting these things right from the start.

The Future Is About Control, Not Automation

As more parts of the pipeline become faster, the limiting factor shifts. It’s no longer about how quickly an asset can be produced, but how well the process is controlled.

Automation makes it easier to generate results, but it also makes it easier to introduce inconsistencies. Small differences in materials, proportions, or structure can spread quickly when assets are produced in larger volumes. Without clear guidelines, those differences accumulate.

This is why control becomes more important than speed. When there are no clear limits or shared rules, problems don’t show up immediately. They get carried from one asset to the next, and by the time they’re visible, they’re already hard to fix.

That’s why most of the effort shifts toward alignment. Clear art direction, shared technical boundaries, and regular checks in the engine tend to have a bigger impact than just pushing assets through faster.
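One way to picture "shared technical boundaries" is a small automated check that every asset passes before review. The sketch below is illustrative only: the `AssetStats` and `Budget` types, the `check_asset` function, and all numeric limits are hypothetical, and a real studio would read these values from its own engine or export tooling.

```python
from dataclasses import dataclass


@dataclass
class AssetStats:
    """Basic measurements for one character asset (hypothetical schema)."""
    name: str
    triangles: int
    texture_size: int    # longest texture edge, in pixels
    material_count: int


@dataclass
class Budget:
    """Shared limits agreed for the whole character set (hypothetical)."""
    max_triangles: int
    max_texture_size: int
    max_materials: int


def check_asset(asset, budget):
    """Return a list of budget violations; an empty list means the asset passes."""
    issues = []
    if asset.triangles > budget.max_triangles:
        issues.append(f"{asset.name}: {asset.triangles} triangles "
                      f"(limit {budget.max_triangles})")
    if asset.texture_size > budget.max_texture_size:
        issues.append(f"{asset.name}: {asset.texture_size}px texture "
                      f"(limit {budget.max_texture_size}px)")
    if asset.material_count > budget.max_materials:
        issues.append(f"{asset.name}: {asset.material_count} materials "
                      f"(limit {budget.max_materials})")
    return issues


# Placeholder limits and assets, for illustration only.
budget = Budget(max_triangles=60_000, max_texture_size=2048, max_materials=3)
assets = [
    AssetStats("hero", triangles=50_000, texture_size=2048, material_count=2),
    AssetStats("npc_guard", triangles=80_000, texture_size=4096, material_count=2),
]

for asset in assets:
    for issue in check_asset(asset, budget):
        print(issue)
```

A check like this does not judge whether a character looks right. It only catches drift in measurable limits early, which leaves review time for the decisions that do need an art director.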

Automation helps move faster, but it doesn’t hold everything together. Keeping the result consistent still comes down to how the process is structured and how closely the work is reviewed.

What Data Says About AI and 3D Character Art

Industry data gives a clearer picture than most headlines. According to the GDC State of the Game Industry report, over half of developers say their companies are already using generative AI tools, while around a third use them personally in their work.

So 52% of companies use AI, while only about a third of developers use it personally. This suggests that companies focused on productivity are more willing to try AI tools to see whether they help. Game artists, on the other hand, remain skeptical: they are protective of artistic creativity and copyright, and wary in light of the massive layoffs in the industry, and rightfully so.

What’s more interesting is how those tools are used. Most teams apply AI to support workflows – ideation, prototyping, and technical stages – rather than final asset creation. This aligns with production reality, where consistency and control are still hard to automate.

Reports from Unity show a similar direction: 62% of studios are prioritizing faster content pipelines and shorter iteration cycles, which explains why AI adoption is concentrated mostly in repetitive and time-consuming parts of production.

The gap between expectation and usage is still there. Public perception leans toward fully generated assets, while in practice, AI is used to speed up specific steps inside the pipeline.

And that distinction is important, because it shows where the real value is – not in replacing artists, but in reducing the cost of getting assets production-ready.

When AI is not used as a purely generative tool trained on vast volumes of existing art, the copyright problem largely goes away. Even with generative AI, some companies address it by training models on their own portfolio. That way, the AI draws only on assets the company holds the copyright for.

What Do 3D Character Artists Think of AI in Their Work?

From the very start of the rise of generative AI, artists have had many concerns about it. But like any innovation that has passed the peak of its hype curve, it has found good use cases beyond the thoughtless generation of art assets.

There are downsides, of course. Junior artists may miss out on skills they would once have built by practicing on the boring, repetitive tasks that AI now handles. The barrier to landing a first job is higher now.

Some artists are happy that the donkey work is reduced, while others are not. Low-quality art existed long before AI, and it will exist long after. The artist’s job is to choose tools wisely to deliver the best result, be it paper and pencil or an AI-assisted plug-in in a 3D editor.

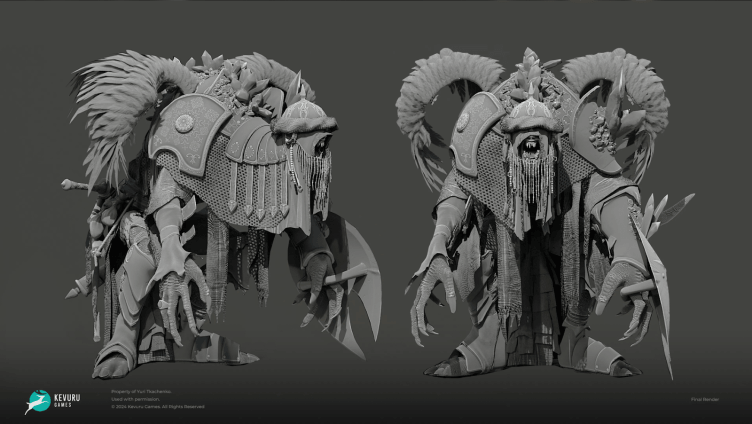

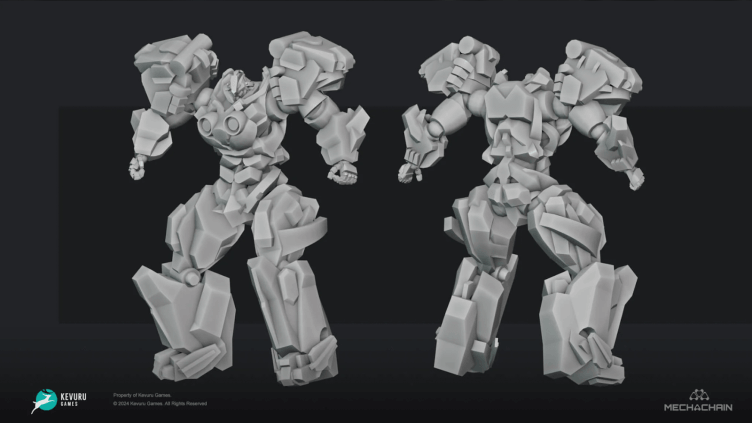

Here is what Olga Andrianova, head of the 3D department at Kevuru Games, says:

“What matters in the end is whether the art is good or not. If the art director knows their work and doesn’t lower the standards, it will be good, independently of whether AI was used in the process or not.”

Should Clients Be Concerned About AI in Production?

For clients, the question is not whether a studio uses AI. At this point, many do, in one form or another. The more relevant question is how those tools are used and what place they have in the process.

AI can speed up certain stages and reduce production overhead, but it doesn’t ensure quality on its own. That still comes down to the team – their standards, how they review the work, and how closely they keep things aligned. A structured pipeline with clear art direction will produce consistent results, regardless of the tools involved.

In that sense, AI is a neutral factor. It can help when used with control, or create issues when it replaces it. The difference usually becomes visible in the final work – in consistency, clarity, and attention to detail.

For clients, a more practical approach is to look at how a studio operates. Do they have defined pipelines, review stages, and quality control? Do they explain how AI fits into their workflow? Is there a clear policy around its use?

In the end, it comes down to the same thing it always has – trust in the team and their ability to deliver. The tools may change, but responsibility for the result does not.